# Years Later, Alphabet’s Everyday ...

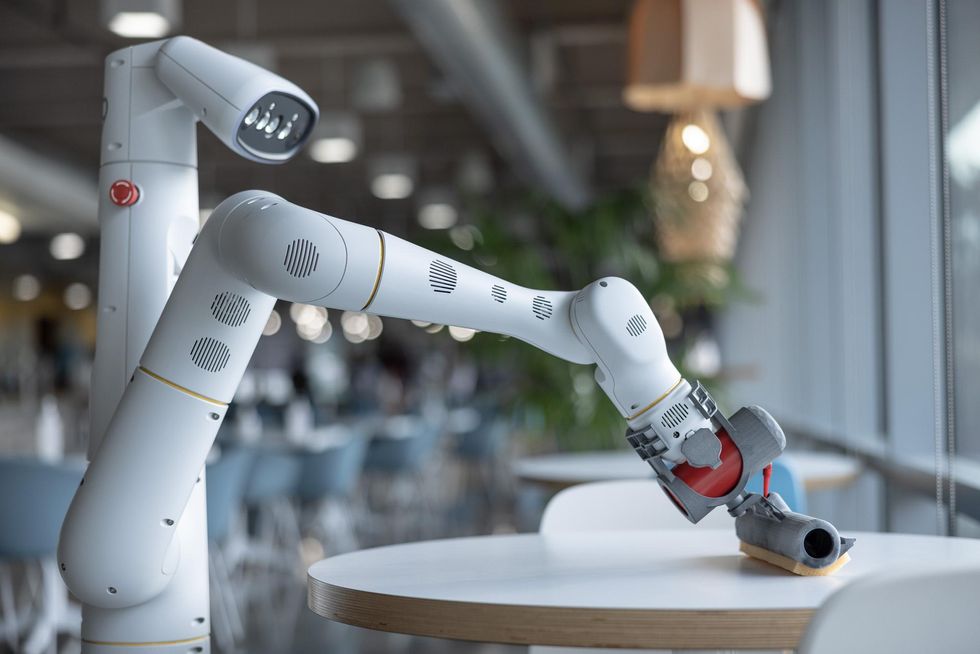

Last week, Google or Alphabet or X or whatever you want to call it announced that its Everyday Robots team has grown enough and made enough progress that it's time for it to become its own thing, now called, you guessed it, "Everyday Robots." There's a new website of questionable design along with a lot of fluffy descriptions of what Everyday Robots is all about. But fortunately, there are also some new videos and enough details about the engineering and the team's approach that it's worth spending a little bit of time wading through the clutter to see what Everyday Robots has been up to over the last couple of years and what their plans are for the near future.

Our headline may sound a little bit snarky, but the headline in Alphabet's own announcement blog post is "everyday robots are (slowly) leaving the lab." It's less of a dig and more of an acknowledgement that getting mobile manipulators to usefully operate in semi-structured environments has been, and continues to be, a huge challenge. We'll get into the details in a moment, but the high-level news here is that Alphabet appears to have thrown a lot of resources behind this effort while embracing a long time horizon, and that its investment is starting to pay dividends. This is a nice surprise, considering the somewhat haphazard state (at least to outside appearances) of Google's robotics ventures over the years. The goal of Everyday Robots, according to Astro Teller, who runs Alphabet's moonshot stuff, is to create "a general-purpose learning robot," which sounds moonshot-y enough I suppose. To be fair, they've got an impressive amount of hardware deployed, says Everyday Robots' Hans Peter Brøndmo:

That's a lot of robots, which is awesome, but I have to question what "autonomously" actually means along with what "a range of useful tasks" actually means. There is really not enough publicly available information for us (or anyone?) to assess what Everyday Robots is doing with its fleet of 100 prototypes, how much manipulator-holding is required, the constraints under which they operate, and whether calling what they do "useful" is appropriate.

If you'd rather not wade through Everyday Robots' weirdly overengineered website, we've extracted the good stuff (the videos, mostly) and reposted them here, along with a little bit of commentary underneath each.

Introducing Everyday Robots

0:01 — Is it just me, or does the gearing behind those motions sound kind of, um, unhealthy?

0:25 — A bit of an overstatement about the Nobel Prize for picking a cup up off of a table, I think. Robots are pretty good at perceiving and grasping cups off of tables, because it's such a common task. Like, I get the point, but I just think there are better examples of problems that are currently human-easy and robot-hard.

1:13 — It's not necessarily useful to draw that parallel between computers and smartphones and compare them to robots, because there are certain physical realities (like motors and manipulation requirements) that prevent the kind of scaling to which the narrator refers.

1:35 — This is a red flag for me because we've heard this "it's a platform" thing so many times before and it never, ever works out. But people keep on trying it anyway. It might be effective when constrained to a research environment, but fundamentally, "platform" typically means "getting it to do (commercially?) useful stuff is someone else's problem," and I'm not sure that's ever been a successful model for robots.

2:10 — Yeah, okay. This robot sounds a lot more normal than the robots at the beginning of the video; what's up with that?

2:30 — I am a big fan of Moravec's Paradox and I wish it would get brought up more when people talk to the public about robots.

The challenge of everyday

0:18 — I like the door example, because you can easily imagine how many different ways it can go that would be catastrophic for most robots: different levers or knobs, glass in places, variable weight and resistance, and then, of course, thresholds and other nasty things like that.

1:03 — Yes. It can't be reinforced enough, especially in this context, that computers (and by extension robots) are really bad at understanding things. Recognizing things, yes. Understanding them, not so much.

1:40 — People really like throwing shade at Boston Dynamics, don't they? But this doesn't seem fair to me, especially for a company that Google used to own. What Boston Dynamics is doing is very hard, very impressive, and come on, pretty darn exciting. You can acknowledge that someone else is working on hard and exciting problems while you're working on different hard and exciting problems yourself, and not be a little miffed because what you're doing is, like, less flashy or whatever.

A robot that learns

0:26 — Saying that the robot is low cost is meaningless without telling us how much it costs. Seriously: "low cost" for a mobile manipulator like this could easily be (and almost certainly is) several tens of thousands of dollars at the very least.

1:10 — I love the inclusion of things not working. Everyone should do this when presenting a new robot project. Even if your budget is infinity, nobody gets everything right all the time, and we all feel better knowing that others are just as flawed as we are.

1:35 — I'd personally steer clear of using words like "intelligently" when talking about robots trained using reinforcement learning techniques, because most people associate "intelligence" with the kind of fundamental world understanding that robots really do not have.

Training the first task

1:20 — As a research task, I can see this being a useful project, but it's important to point out that this is a terrible way of automating the sorting of recyclables from trash. Since all of the trash and recyclables already get collected and (presumably) brought to a few centralized locations, in reality you'd just put your system there, where the robots could be stationary, have some control over their environment, and do a much better job much more efficiently.

1:15 — Hopefully they'll talk more about this later, but when thinking about this montage, it's important to ask which of these tasks you would actually want a mobile manipulator to be doing in the real world, and which you would just want automated somehow, because those are very different things.

Building with everyone
